Current trends in machine learning.

Here’s an overview of ML techniques and directions to watch in the coming years.


Human learning is commonly understood as the long-term change in mental representations and behavior due to experience. Machine Learning (ML) is a branch of Artificial Intelligence and computer science that uses algorithms and data to imitate the way humans learn, gradually improving its accuracy.

Although the term ML may sound recent, it was coined back in 1959. At first, ML was used strictly in academia, but its spectacular rise can be attributed to ever-growing computational power and the emergence of business use cases, e.g. email spam detectors in the 1990s.

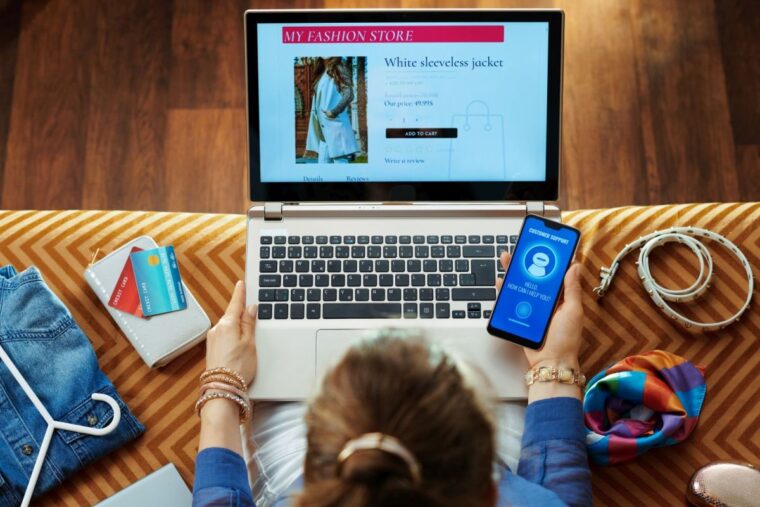

The popularity of ML applications in business rose steeply in the second decade of the 21st century, and they proved effective in various sectors during the COVID-19 pandemic. Real-time chatbot agents, recommendation engines, customer churn modeling and fraud detection are just some real-world applications.


Developing ML solutions requires a high level of technical expertise. At DAC.Digital, we strive to always stay on top of the game through constant research and development (R&D). Our company was born out of several R&D projects and has developed into a leading service provider of cutting-edge business solutions.

What separates us from others? Our principles and commitment to them, experience with a range of technology stacks, the unique deployment process, transparent communication practices, high business acumen, and our illustrious clientele.

We believe in long-term collaboration, which yields growth for both sides, and we offer free discovery workshops conducted by our in-house experts. Moreover, we help identify appropriate, not over-engineered solutions and bring clarity to cluttered roadmaps.

Buzzwords such as Machine Learning and Artificial Intelligence have recently gained momentum in the business world. For businesses looking to deploy such emerging technologies to gain an advantage, it is imperative to treat them not as a supplement but as an integral part of the business processes. In the same way, a good doctor would recommend a balanced and nutritious diet instead of supplements. Our team’s main principle is to develop holistic solutions and NOT cut corners, which makes a vital difference in helping our clients achieve their goals.



Low-code development methods enable faster app delivery, requiring little to no coding. Low-code development platforms provide a coding setting with a more accessible and more intuitive visual interface. It lowers the difficulty of app development and makes the technology accessible to broader audiences.

The no-code method, on the other hand, requires no knowledge of coding. No-code frameworks allow people outside the IT industry to build software without writing any code. Based on a visual interface, these tools use drag-and-drop blocks and other elements for creating apps.

In machine learning, these methods allow applications to be built while reducing or eliminating lengthy steps such as data preprocessing, model creation, training and deployment. These emerging technology trends offer speed and flexibility that save time and resources.

Typically, engineers use a set of input data to train ML algorithms or models. However, raw data may not always be in a form suitable for every algorithm, so experts may have to apply data pre-processing and feature engineering methods. Afterwards, they must perform algorithm selection and hyperparameter optimization to enhance the model’s predictive performance.
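To make these steps more concrete, here is a minimal sketch in plain Python. It assumes a toy data set with made-up values, uses feature standardization as the pre-processing step, and picks the `k` of a simple k-nearest-neighbours classifier by validation accuracy. All function names are illustrative, not part of any particular library.

```python
import math
from collections import Counter


def standardize(rows, means=None, stds=None):
    """Scale each feature to zero mean and unit variance (a common pre-processing step)."""
    if means is None:
        cols = list(zip(*rows))
        means = [sum(c) / len(c) for c in cols]
        # Fall back to 1.0 for constant features to avoid dividing by zero.
        stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
                for c, m in zip(cols, means)]
    scaled = [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]
    return scaled, means, stds


def knn_predict(train_x, train_y, point, k):
    """Predict the majority label among the k nearest training points."""
    nearest = sorted(zip(train_x, train_y),
                     key=lambda pair: math.dist(pair[0], point))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def accuracy(train_x, train_y, test_x, test_y, k):
    """Share of test points whose predicted label matches the true label."""
    hits = sum(knn_predict(train_x, train_y, x, k) == y
               for x, y in zip(test_x, test_y))
    return hits / len(test_y)


# Toy data: [height_cm, weight_kg] -> label. Values are made up for illustration.
train_x = [[150, 50], [152, 53], [155, 52], [180, 85], [183, 90], [178, 82]]
train_y = ["small", "small", "small", "large", "large", "large"]
valid_x = [[151, 51], [181, 88], [154, 54], [179, 84]]
valid_y = ["small", "large", "small", "large"]

# Pre-processing: fit the scaler on training data only, then reuse it for validation.
train_s, means, stds = standardize(train_x)
valid_s, _, _ = standardize(valid_x, means, stds)

# Hyperparameter optimization: try each candidate k and keep the best validation score.
scores = {k: accuracy(train_s, train_y, valid_s, valid_y, k) for k in (1, 3, 5)}
best_k = max(scores, key=scores.get)
print(scores, best_k)
```

On real data the candidates would rarely tie as they do on this toy set, and the search would usually cover more than one hyperparameter.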

Automated machine learning (AutoML) strives to automate the tasks involved in applying machine learning to real-world problems, including the more challenging ones: improving model accuracy and selecting algorithms, feature sets and hyperparameters. It was proposed as an AI-based solution to the growing challenge of applying machine learning.

The high degree of automation in AutoML helps less experienced practitioners use machine learning models and techniques, and lets dedicated engineers save time and resources on some tasks. Additionally, automating end-to-end ML application processes makes it quicker to build simpler solutions and more efficient models.
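The core idea of automated selection can be sketched in a few lines of plain Python. This is a simplified illustration, not how any specific AutoML product works: the two candidate model families, the candidate names and the toy data are all assumptions made for the example. Every configuration is scored with cross-validation, and the best one is returned without manual tuning.

```python
from statistics import mean


def make_threshold_model(feature, threshold):
    """Candidate family 1: predict 1 when one feature exceeds a fixed threshold."""
    def fit(xs, ys):
        return lambda x: int(x[feature] > threshold)
    return fit


def make_centroid_model():
    """Candidate family 2: predict the class whose mean feature vector is closest."""
    def fit(xs, ys):
        centroids = {}
        for label in set(ys):
            members = [x for x, y in zip(xs, ys) if y == label]
            centroids[label] = [mean(col) for col in zip(*members)]

        def predict(x):
            return min(centroids, key=lambda c: sum(
                (a - b) ** 2 for a, b in zip(x, centroids[c])))
        return predict
    return fit


def cross_val_accuracy(fit, xs, ys, folds=3):
    """Average accuracy over simple interleaved folds."""
    scores = []
    for f in range(folds):
        pairs = list(enumerate(zip(xs, ys)))
        train = [p for i, p in pairs if i % folds != f]
        test = [p for i, p in pairs if i % folds == f]
        predict = fit([x for x, _ in train], [y for _, y in train])
        scores.append(mean(int(predict(x) == y) for x, y in test))
    return mean(scores)


def auto_select(candidates, xs, ys):
    """Score every candidate configuration and return the best name plus all scores."""
    results = {name: cross_val_accuracy(fit, xs, ys)
               for name, fit in candidates.items()}
    return max(results, key=results.get), results


# Toy data with made-up values: two features, binary label.
xs = [[1.0, 5.0], [1.2, 4.8], [0.9, 5.1], [3.0, 1.0], [3.2, 0.8], [2.9, 1.1]]
ys = [0, 0, 0, 1, 1, 1]

candidates = {
    "threshold(x0>3.1)": make_threshold_model(0, 3.1),
    "threshold(x0>2.0)": make_threshold_model(0, 2.0),
    "nearest_centroid": make_centroid_model(),
}
best, results = auto_select(candidates, xs, ys)
print(best, results)
```

Real AutoML tools search far larger spaces and also automate feature engineering, but the loop of "propose, evaluate, keep the best" is the same in spirit.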

Natural language processing allows algorithms and machine learning models to recognise and understand human speech, meanings and intentions behind the words. In essence, NLP is about understanding, interpreting and mimicking the complexity of a natural spoken language.

NLP breaks down language, provided as text or sound data sets, into fragments. This way, it can analyse and understand the in-context grammatical structure of sentences and the meaning of words. There are two approaches to this method:

The rule-based approach is an early approach to NLP algorithm development. It uses grammatical rules created by linguistic experts.
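As a rough illustration of the rule-based idea, the sketch below tags words with a part of speech using a handful of hand-written patterns. The rules and tag names are illustrative assumptions, far simpler than a grammar a linguist would write.

```python
import re

# Hand-written rules, checked in order; the first matching pattern wins.
# These rules are illustrative only and will mislabel many real words.
RULES = [
    (re.compile(r"^(the|a|an)$", re.IGNORECASE), "DET"),
    (re.compile(r"^\d+(\.\d+)?$"), "NUM"),
    (re.compile(r"^.+ly$"), "ADV"),
    (re.compile(r"^.+(ing|ed)$"), "VERB"),
    (re.compile(r"^.+s$"), "NOUN_PLURAL"),
]
DEFAULT_TAG = "NOUN"


def tag(sentence):
    """Split a sentence into tokens and assign each one a tag from the rules."""
    tokens = re.findall(r"\d+(?:\.\d+)?|[A-Za-z]+", sentence)
    tagged = []
    for token in tokens:
        for pattern, label in RULES:
            if pattern.match(token):
                tagged.append((token, label))
                break
        else:
            # No rule matched, so fall back to the default tag.
            tagged.append((token, DEFAULT_TAG))
    return tagged


print(tag("The robots quickly sorted 42 parcels"))
```

Every new language phenomenon needs another manually written rule, which is the main limitation the machine learning approach tries to address.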

In the machine learning approach, algorithms learn and perform the tasks automatically, using provided data sets without manual rules. We can divide it into the following methods:

Statistical

A statistical language model learns the probability of words occurring in a sequence based on text samples. Simpler models work on short sequences of words, while more complex ones work on sentences and paragraphs.
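One of the simplest models of this kind is a bigram model, which estimates how likely a word is given only the previous word. The sketch below assumes a tiny made-up corpus and uses plain counts without smoothing; the function names are illustrative.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word follows each other word in the text samples."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # <s> and </s> mark the start and end of a sentence.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, word in zip(tokens, tokens[1:]):
            counts[prev][word] += 1
    return counts


def probability(counts, prev, word):
    """Estimate P(word | prev) as a relative frequency; 0.0 if prev was never seen."""
    following = counts.get(prev, Counter())
    total = sum(following.values())
    return following[word] / total if total else 0.0


def most_likely_next(counts, prev):
    """Return the word seen most often after prev, or None if prev was never seen."""
    following = counts.get(prev)
    return following.most_common(1)[0][0] if following else None


corpus = ["the cat sat", "the cat ran", "the dog sat"]
model = train_bigram_model(corpus)

print(probability(model, "the", "cat"))   # 2 of the 3 words after "the" are "cat"
print(probability(model, "cat", "sat"))   # 1 of the 2 words after "cat" is "sat"
print(most_likely_next(model, "the"))
```

Models that look at longer histories follow the same counting idea, but need much more text to estimate the probabilities reliably.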

Neural

NLP algorithms use artificial neural networks to analyse and interpret language data. Neural networks can understand and simulate human language, allowing machines to predict words and address topics not part of the learning process.

Statistical and neural approaches can be combined to create a more effective machine-learning model for language processing. Hybrid models can use both methods for better performance on complex tasks. They can also leverage the diversity of predictions and reduce the risk of overfitting.

Learning-based NLP systems using Convolutional Neural Networks and Recurrent Neural Networks learn as they go, extracting increasingly complex and accurate meanings from raw, unstructured data. Doing so requires vast amounts of data, highlighting the importance of big data in NLP.

Multimodal NLP models combine information from different sources such as text, image, audio, and video for a more extensive and accurate understanding of the data. There are different types of creating multimodal models that include:

Multimodal methods can efficiently solve tasks such as speech recognition, audio analysis and emotion analysis.

The role of TinyML is to perform on-device data analytics at very low power, typically in the mW range and below, for the always-on use of mostly battery-operated mobile devices. Recent growth in embedded ML has contributed to the development of ecosystems that support it.

Since these techniques work with low-energy systems like microcontrollers, microprocessors and neural accelerators, we can bring machine learning to edge devices and achieve real-time responsiveness. This way, ML experts can achieve more with less. Benefits of developing TinyML include low latency, energy savings, reduced bandwidth and better data privacy, since data stays on the edge devices rather than on servers.

Incorporating machine learning into robotic process automation (RPA) systems helps identify, in real time, deviations from the typical behaviour of rule-based processes and machine learning systems by processing incoming data. It provides insight into things that occur without explicit programming for every possible instance.
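A very small sketch of this idea is shown below. Instead of hard-coding a rule for every unusual case, the detector learns what "typical" looks like from recent data and flags values that fall far outside it. The class name, the z-score technique, the threshold and the invoice amounts are all assumptions chosen for illustration; production systems would typically use richer models.

```python
from collections import deque
from statistics import mean, pstdev


class DeviationDetector:
    """Flags values that deviate strongly from recently observed ones."""

    def __init__(self, window=20, threshold=3.0, min_history=5):
        self.history = deque(maxlen=window)   # recent values considered normal
        self.threshold = threshold            # allowed distance in standard deviations
        self.min_history = min_history        # observations needed before flagging

    def observe(self, value):
        """Return True if the value looks unusual; normal values extend the history."""
        is_deviation = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                is_deviation = abs(value - mu) / sigma > self.threshold
            else:
                # All recent values were identical, so any change is a deviation.
                is_deviation = value != mu
        if not is_deviation:
            self.history.append(value)
        return is_deviation


# Hypothetical stream of invoice amounts processed by an automated workflow.
detector = DeviationDetector()
amounts = [100, 102, 98, 101, 99, 103, 950, 100]
flags = [detector.observe(a) for a in amounts]
print(flags)
```

In an RPA workflow, a flagged item could be routed to a human for review while normal items continue through the automated path.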

We can build intelligent automation (IA) systems by combining machine learning with RPA, NLP, and AI. These techniques complement one another for more innovative solutions. With the adoption of reinforcement learning, we can create systems that adapt to their ecosystem. IA is designed to efficiently handle structured and unstructured data and be compatible with any system.